From Compliance to Competitive Edge: Reimagining RoPA as the Foundation of AI Strategy

Most organizations don’t struggle with AI ambition.

They struggle with AI delegation.

Privacy leaders are being asked to move faster, enable AI adoption, and support innovation, while maintaining defensibility, documentation, and regulatory alignment. Every new tool raises familiar questions:

- Who owns this processing activity?

- What data categories are involved?

- What level of risk does it introduce?

- Who remains accountable if something goes wrong?

The pressure to “do AI” is accelerating. But without a structural framework, AI decisions are often driven by a mix of urgency and caution. Some teams automate prematurely. Others resist entirely. Few operate from a shared, documented map of responsibility.

This is not primarily a technology challenge.

It is an operational one.

To move beyond experimentation, AI must be treated not as a standalone capability but as organizational intelligence: embedded into workflows, mapped to ownership, and aligned with risk exposure.

The foundation for this already exists inside most enterprises.

It is the Record of Processing Activities (RoPA).

The Delegation Problem: What Should Actually Run Autonomously?

When people hear “autonomous AI,” they often imagine systems acting independently. In enterprise environments, autonomy is something far more practical.

Autonomy is structured delegation.

AI can execute repeatable, signal-driven tasks continuously. Humans must retain responsibility - judgment, escalation authority, and ultimate accountability.

The real question is not “Can we automate this?”

It is “What level of autonomy does this activity deserve?”

Effective AI governance separates work into two layers:

- Execution - high-frequency, logic-driven tasks

- Responsibility - ownership, review, and accountability

When these layers are clearly defined, automation scales safely. When they are blurred, organizations either over-constrain AI or expose themselves to unmanaged risk.

Most AI strategies stall precisely because this separation is never made explicit.

AI strategy is not a technology roadmap.

It is a delegation architecture.

Why RoPA Is the Missing Blueprint

RoPA is typically viewed as a regulatory requirement, something maintained for audit readiness.

But operationally, it is far more powerful.

RoPA is often the only structured artifact in the enterprise that connects:

- Systems and infrastructure

- Processing purposes

- Data categories

- Access controls

- Ownership

- Risk exposure

In other words, RoPA already maps how work actually happens.

It documents where execution occurs, who owns it, what data is involved, and where risk is concentrated.
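To make that map concrete, here is a minimal sketch of a single RoPA entry as a data structure. The `ProcessingActivity` class, its field names, the low/medium/high risk scale, and the sample entries are all illustrative assumptions, not a standard RoPA schema or any vendor's data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProcessingActivity:
    """One hypothetical RoPA entry; field names are illustrative only."""
    name: str
    systems: list[str]            # systems and infrastructure involved
    purpose: str                  # processing purpose
    data_categories: list[str]    # categories of data processed
    access_roles: list[str]       # who can access the data
    owner: Optional[str]          # None means no accountable owner is recorded
    risk_level: str               # assumed scale: "low", "medium", "high"


def missing_owner(entries: list[ProcessingActivity]) -> list[str]:
    """Return the names of activities that have no recorded owner."""
    return [entry.name for entry in entries if not entry.owner]


# Two made-up entries, purely for illustration.
ropa = [
    ProcessingActivity(
        name="Newsletter delivery",
        systems=["email-platform"],
        purpose="Marketing communication",
        data_categories=["email address"],
        access_roles=["marketing"],
        owner="Head of Marketing",
        risk_level="low",
    ),
    ProcessingActivity(
        name="Candidate screening",
        systems=["applicant-tracking"],
        purpose="Recruitment",
        data_categories=["CV", "contact details"],
        access_roles=["hr"],
        owner=None,
        risk_level="high",
    ),
]

print(missing_owner(ropa))  # → ['Candidate screening']
```

Once entries share a shape like this, questions such as "which activities have no accountable owner?" become simple queries rather than manual reviews.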

When AI strategy is layered on top of this map, the conversation changes.

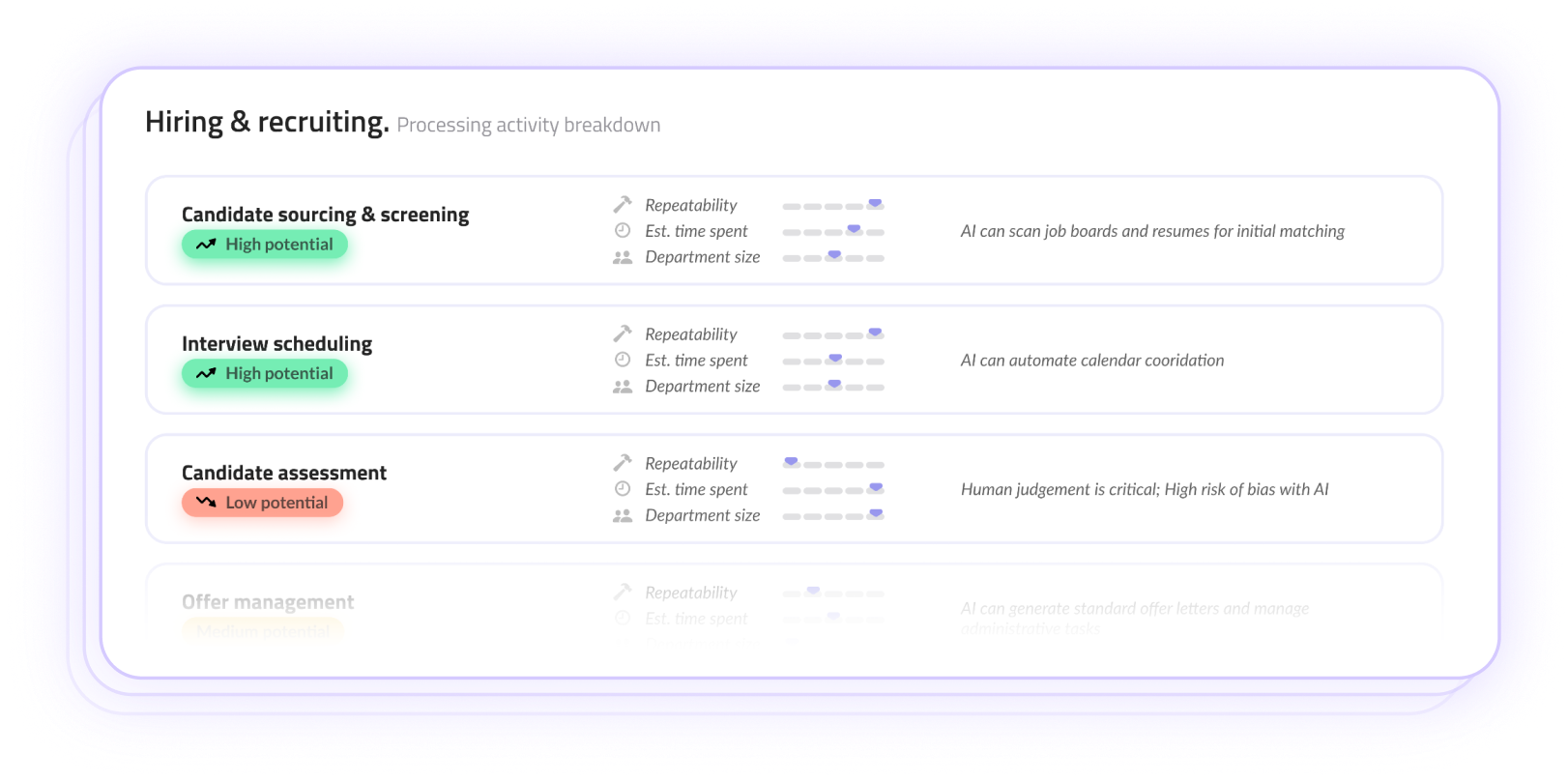

Instead of brainstorming generic “AI use cases,” organizations can identify autonomy candidates grounded in real processing activities:

- Which workflows are repeatable and high-volume?

- Which involve low data sensitivity and clear ownership?

- Which carry significant exposure and require assisted autonomy?

RoPA transforms AI from an abstract capability into an operational decision.

It reframes AI governance from “Should we allow this tool?” to “How should this activity be structured?”

Operational Triage: Mapping Risk to Opportunity

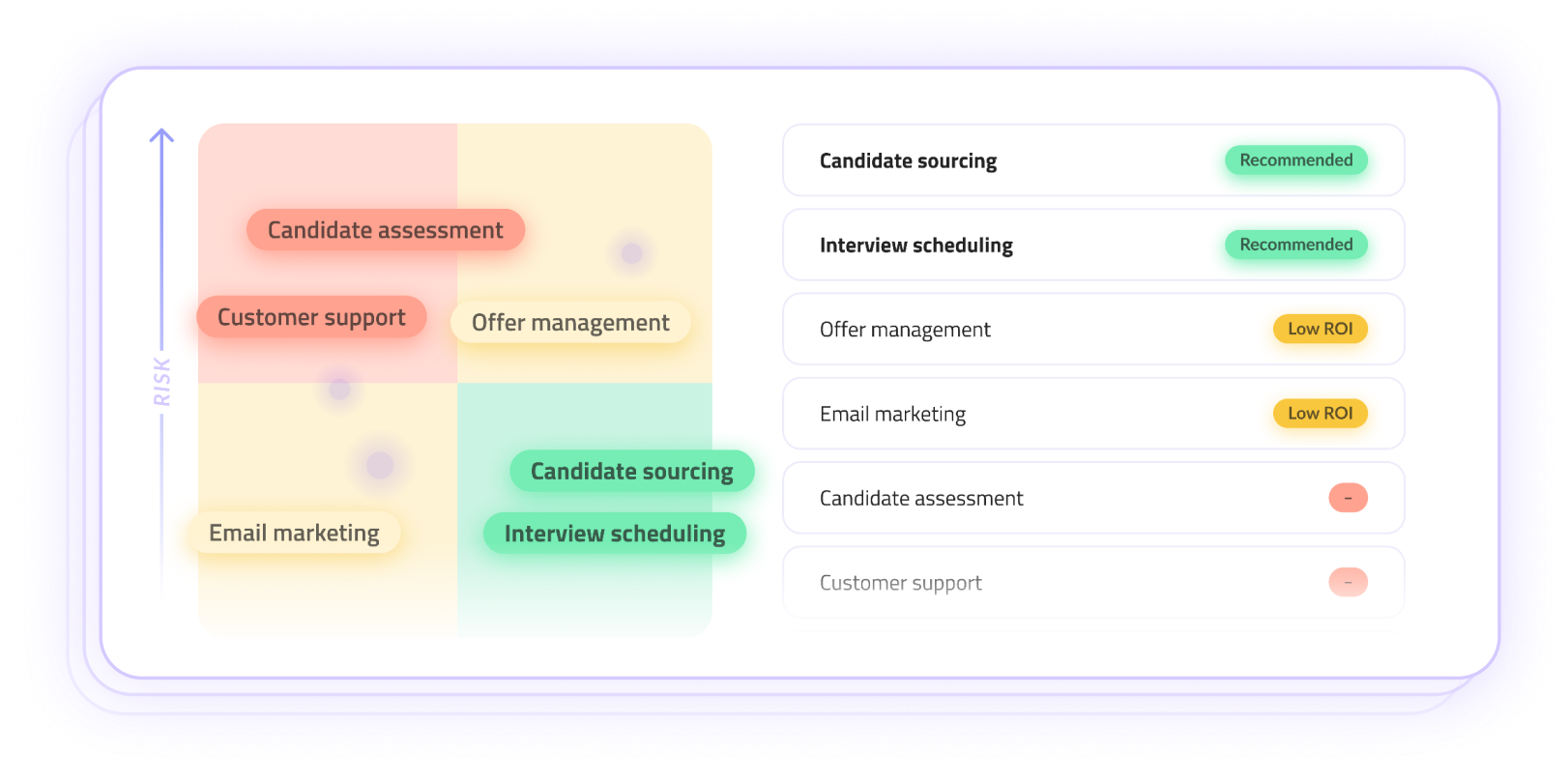

Every processing activity sits at the intersection of two forces:

- Risk & Exposure - data sensitivity, regulatory implications, potential impact

- Business Value & Opportunity - efficiency gains, scalability, strategic advantage

When these dimensions are visualized together, AI strategy becomes a prioritization exercise rather than a philosophical debate.

Low-risk, high-value activities may be strong candidates for full autonomy.

High-risk, high-value activities may require assisted autonomy and guardrails.

Low-value activities may not justify automation at all.
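The three rules above can be sketched as a small decision function. The 1 to 5 scoring scale, the threshold of 3, and the names `Autonomy` and `triage` are assumptions made for illustration; a real program would calibrate scores against its own risk methodology and treat the result as a starting recommendation, not a verdict.

```python
from enum import Enum


class Autonomy(Enum):
    FULL = "full autonomy"
    ASSISTED = "assisted autonomy with guardrails"
    NONE = "do not automate"


def triage(risk: int, value: int, threshold: int = 3) -> Autonomy:
    """Map hypothetical 1-5 risk and value scores to a suggested autonomy level."""
    if not (1 <= risk <= 5 and 1 <= value <= 5):
        raise ValueError("scores must be between 1 and 5")
    if value < threshold:
        return Autonomy.NONE      # low value: automation may not be justified
    if risk < threshold:
        return Autonomy.FULL      # low risk, high value
    return Autonomy.ASSISTED      # high risk, high value


print(triage(risk=1, value=5).value)  # → full autonomy
print(triage(risk=5, value=5).value)  # → assisted autonomy with guardrails
print(triage(risk=2, value=1).value)  # → do not automate
```

Value is checked first so that a low-value activity is never recommended for automation, regardless of how low its risk is.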

This structured triage lets innovation accelerate precisely where it creates measurable value, while keeping accountability explicit where exposure demands it.

Privacy teams, in this model, do not slow AI down.

They make it operable.

From Reactive Compliance to Intentional Enablement

The fundamental shift is from treating AI strategy as something you “launch” to treating it as something you operate.

In traditional governance models, privacy and compliance were perceived as brakes - mechanisms designed to slow momentum and prevent missteps.

But in a structured, risk-aware operating model, RoPA becomes something entirely different.

It becomes an accelerator.

When your processing activities are clearly mapped, with defined ownership, documented purposes, and explicit risk boundaries, autonomy is no longer reactive. It becomes intentional.

Accountability does not disappear. It becomes visible.

Instead of debating every new AI initiative from scratch, organizations can confidently green-light high-value innovation because the guardrails are already embedded into the workflow.

In this model, success is not measured by how many AI tools you deploy.

It is measured by how much manual friction you can safely remove from core processes, without compromising oversight.

Where MineOS Changes the Equation

The challenge, of course, is that most RoPAs today exist as static spreadsheets. They capture a single point in time and become outdated as soon as systems evolve, vendors change, or AI tools are introduced.

Autonomy cannot operate on static documentation.

MineOS bridges the gap between documentation and execution.

Instead of asking teams to invent an AI governance structure from scratch, MineOS activates the structure they already maintain (their data maps, processing purposes, and ownership records) and turns it into living operational intelligence.

It continuously surfaces where AI tools are being introduced, which data categories are involved, who owns each activity, and how execution aligns with your defined Execution vs. Responsibility layers.

By providing this continuous signal, MineOS ensures AI governance remains grounded in actual processing realities.

It does not replace compliance. It operationalizes it.

With MineOS, RoPA is no longer a checklist prepared for audits.

It becomes the operating manual for your AI future.

Interested in seeing how autonomous AI strategy works in practice?

Contact us to learn more or request a walkthrough of MineOS.